Trace comparison helps you understand why two executions of the same workflow produced different results, latencies, or costs. This is useful for debugging regressions, A/B testing prompts, and analyzing performance variations.

When to Compare Traces

- Debugging regressions - Compare a failing trace with a successful one to spot differences

- A/B testing - Compare traces from different prompt versions or model configurations

- Performance analysis - Identify why some requests are slower than others

- Cost optimization - Compare token usage across different approaches

Accessing Trace Comparison

- Navigate to Observability → Traces in the Netra dashboard

- Select the first trace you want to compare by clicking on it

- Hold Shift and click on the second trace, or use the checkbox selection

- Click the Compare button in the toolbar

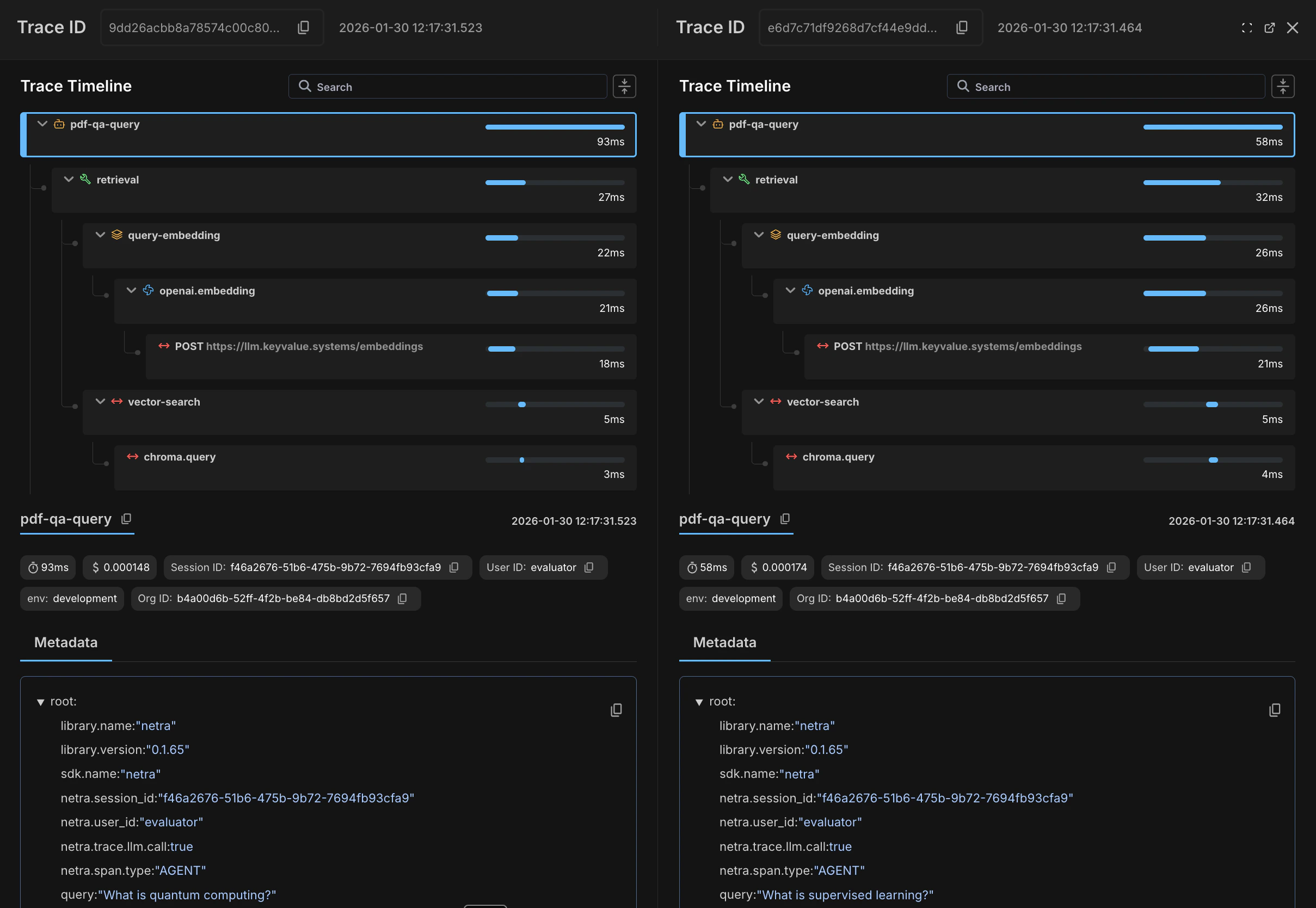

Understanding the Comparison View

The comparison view displays two traces side by side, making it easy to spot differences in execution flow, timing, and outcomes. Each panel shows:

- Trace ID and timestamp at the top of each panel

- Hierarchical span structure showing the full execution flow (e.g., pdf-qa-query → retrieval → query-embedding → openai.embedding)

- Duration for each span displayed on the right side

- Color coding - error spans are shown in red, successfully executed spans in green

Comparison Scenarios

Scenario 1: Debugging a Slow Request

You notice some requests taking 5x longer than usual. Compare a slow trace with a fast one:

- Filter traces by duration to find a slow example

- Find a similar request with normal duration

- Compare the two traces

What to look for:

- Spans that are significantly slower in one trace

- Additional spans in the slow trace (retry loops, fallbacks)

- Different code paths being executed
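The checks listed above can also be run offline once you have span data for both traces. The following is a minimal sketch, not Netra's API: it assumes each trace has been flattened into a list of dicts with hypothetical `name` and `duration_ms` fields, and the 2x slowdown threshold is an arbitrary choice.

```python
from collections import defaultdict


def total_duration_by_name(spans):
    """Sum durations per span name (a name can repeat, e.g. in retry loops)."""
    totals = defaultdict(float)
    for span in spans:
        totals[span["name"]] += span["duration_ms"]
    return dict(totals)


def compare_durations(fast_spans, slow_spans, ratio_threshold=2.0):
    """Return (slower, extra): spans that slowed down, and spans only in the slow trace."""
    fast = total_duration_by_name(fast_spans)
    slow = total_duration_by_name(slow_spans)
    slower = {
        name: (fast[name], slow[name])
        for name in fast.keys() & slow.keys()
        if fast[name] > 0 and slow[name] / fast[name] >= ratio_threshold
    }
    extra = sorted(slow.keys() - fast.keys())
    return slower, extra


# Hypothetical span data; field names and values are illustrative only.
fast_trace = [
    {"name": "retrieval", "duration_ms": 120.0},
    {"name": "openai.embedding", "duration_ms": 80.0},
]
slow_trace = [
    {"name": "retrieval", "duration_ms": 130.0},
    {"name": "openai.embedding", "duration_ms": 450.0},
    {"name": "openai.embedding.retry", "duration_ms": 400.0},
]

slower, extra = compare_durations(fast_trace, slow_trace)
print(slower)  # {'openai.embedding': (80.0, 450.0)}
print(extra)   # ['openai.embedding.retry']
```

Grouping by span name is what makes the two traces comparable here, which is also why consistent span naming matters.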

Scenario 2: Comparing Prompt Versions

You’ve updated a prompt and want to verify the change:

- Run both prompt versions with the same input

- Capture the trace IDs or find them by timestamp

- Compare the traces

What to look for:

- Token count differences (is the new prompt more efficient?)

- Response quality differences in the completion content

- Latency changes
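To put numbers on the token and latency differences, you can compute relative changes between the two runs. This is a rough sketch under stated assumptions: `RunSummary` and its fields are hypothetical, and you would fill them in by hand from the values shown for each trace.

```python
from dataclasses import dataclass


@dataclass
class RunSummary:
    """Hypothetical per-trace summary; the field names are illustrative."""
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float

    @property
    def total_tokens(self) -> int:
        return self.prompt_tokens + self.completion_tokens


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent (negative means a reduction)."""
    if old == 0:
        raise ValueError("baseline value must be non-zero")
    return (new - old) / old * 100.0


def compare_runs(baseline: RunSummary, candidate: RunSummary) -> dict:
    """Summarize token and latency changes, rounded to one decimal place."""
    return {
        "total_tokens_pct": round(
            percent_change(baseline.total_tokens, candidate.total_tokens), 1
        ),
        "latency_pct": round(
            percent_change(baseline.latency_ms, candidate.latency_ms), 1
        ),
    }


# Made-up example values for the old and new prompt versions.
old_prompt = RunSummary(prompt_tokens=800, completion_tokens=200, latency_ms=2000.0)
new_prompt = RunSummary(prompt_tokens=600, completion_tokens=200, latency_ms=1800.0)
print(compare_runs(old_prompt, new_prompt))
# {'total_tokens_pct': -20.0, 'latency_pct': -10.0}
```

Response quality is not captured by these numbers; it still needs a manual read of the completion content in each trace.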

Scenario 3: Investigating Failures

A request that usually succeeds is now failing:

- Find a recent failed trace

- Find a recent successful trace for the same operation

- Compare to identify the divergence point

What to look for:

- The span where the error occurred

- Input differences that might have caused the failure

- Missing or different API responses
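The idea of a divergence point can be sketched as a walk over both traces in execution order that stops at the first pair of spans that differ. This sketch assumes hypothetical `name` and `status` fields, and assumes an error is recorded on the span that failed rather than propagated to its parents.

```python
from itertools import zip_longest


def find_divergence(ok_spans, failed_spans):
    """Return (index, ok_span, failed_span) for the first differing pair, or None."""
    for index, (ok, failed) in enumerate(zip_longest(ok_spans, failed_spans)):
        if ok is None or failed is None:
            # One trace has spans the other lacks (e.g. it stopped early).
            return index, ok, failed
        if ok["name"] != failed["name"] or ok["status"] != failed["status"]:
            return index, ok, failed
    return None


# Hypothetical spans in execution order; values are illustrative only.
ok_trace = [
    {"name": "pdf-qa-query", "status": "ok"},
    {"name": "retrieval", "status": "ok"},
    {"name": "query-embedding", "status": "ok"},
]
failed_trace = [
    {"name": "pdf-qa-query", "status": "ok"},
    {"name": "retrieval", "status": "ok"},
    {"name": "query-embedding", "status": "error"},
]

print(find_divergence(ok_trace, failed_trace))
# (2, {'name': 'query-embedding', 'status': 'ok'}, {'name': 'query-embedding', 'status': 'error'})
```

Once you know the diverging span, compare its inputs and the API responses it received in both traces.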

Tips for Effective Comparison

- Use consistent naming - Ensure your spans have consistent names across traces so they align properly in the comparison view.

- Add identifying attributes - Include attributes like request.id, user.id, or experiment.variant to make traces easier to find and compare.

- Compare similar operations - Comparing traces from completely different workflows won’t be useful. Filter to the same operation type.

- Check timestamps - Ensure you’re comparing traces from similar time periods to avoid infrastructure differences.
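To illustrate why identifying attributes help, here is a small sketch that picks comparable traces out of a list by attribute value. It does not use the Netra SDK: the trace records, the `trace_id` and `attributes` keys, and the `find_traces` helper are all hypothetical.

```python
def find_traces(traces, required_attributes):
    """Return traces whose attributes contain every required key/value pair."""
    return [
        trace
        for trace in traces
        if all(
            trace.get("attributes", {}).get(key) == value
            for key, value in required_attributes.items()
        )
    ]


# Hypothetical trace records tagged with the attributes suggested above.
traces = [
    {"trace_id": "t1", "attributes": {"user.id": "u-42", "experiment.variant": "A"}},
    {"trace_id": "t2", "attributes": {"user.id": "u-42", "experiment.variant": "B"}},
    {"trace_id": "t3", "attributes": {"user.id": "u-7", "experiment.variant": "A"}},
]

pair = find_traces(traces, {"user.id": "u-42"})
print([t["trace_id"] for t in pair])  # ['t1', 't2']
```

With experiment.variant set on every trace, the two results above are a natural A/B pair to open in the comparison view.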

Exporting Comparison Data

To share comparison results with your team:

- Click Export in the comparison view

- Choose your format:

  - PDF - Visual report with timeline and metrics

  - JSON - Raw data for programmatic analysis

- The export includes both traces and highlighted differences
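If you choose JSON, a reasonable first step is to inspect the file before writing any analysis against it. The export schema is not described on this page, so this sketch deliberately assumes nothing about it: it only loads the file and reports the type of each top-level entry. The `summarize_export` helper and the file name in the usage note are hypothetical.

```python
import json
from pathlib import Path


def summarize_export(path):
    """Load an exported comparison file and describe its top-level structure."""
    data = json.loads(Path(path).read_text(encoding="utf-8"))
    if isinstance(data, dict):
        # Map each top-level key to the Python type name of its value.
        return {key: type(value).__name__ for key, value in data.items()}
    return {"<root>": type(data).__name__}
```

For example, calling `summarize_export("comparison.json")` on a downloaded export shows which keys are present, so you can decide how to read the traces and differences from there.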

Next Steps

- Custom Trace Views - Create saved views to quickly find traces for comparison

- Traces Overview - Learn more about traces and spans